The CLEAR Act: Transparency Is Becoming a Baseline Requirement for AI

Vered Horesh

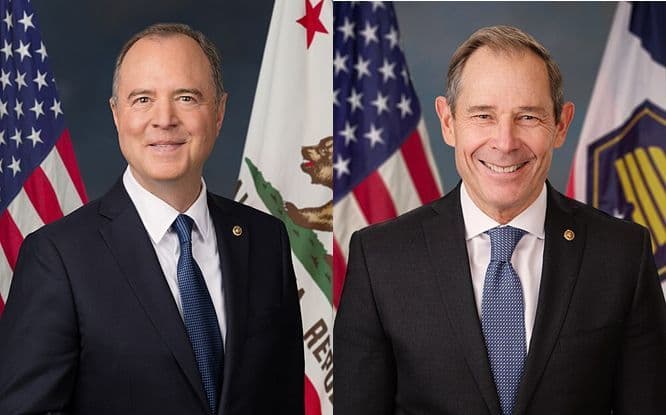

On February 10, 2026, Senators Adam Schiff (D-CA) and John Curtis (R-UT) introduced the bipartisan Copyright Labeling and Ethical AI Reporting (CLEAR) Act, which aims to establish meaningful transparency safeguards for generative AI model development.

Source: https://www.schiff.senate.gov/news/press-releases/news-sens-schiff-curtis-introduce-bipartisan-bill-to-protect-creators-work-implement-transparency-safeguards-in-ai-model-development/

This is a direct response to a core failure mode in today’s AI ecosystem: creators have had no reliable visibility into whether their work was used to train models, and no practical way to verify how it is being used. That opacity has created a widening trust gap among creators, enterprises, and the public.

At Bria AI, we chose a different path: licensed data, traceable provenance, built-in accountability.

As Vered Horesh, Bria’s Chief AI Strategy Officer, shared in support of the bill:

“Trustworthy AI cannot be built in the dark. The public deserves to know what fuels the systems shaping our culture, economy, and daily lives. The CLEAR Act shifts the burden to where it belongs, on developers, and marks a vital step toward restoring trust, fairness, and accountability in the age of AI.”

This is a meaningful step forward. Now it is time to advance it.

What the CLEAR Act is trying to fix

Generative AI has scaled faster than the transparency norms that already govern other high-impact industries. That gap creates a structural asymmetry:

- Creators cannot verify usage, so they cannot negotiate, enforce rights, or make informed choices.

- Developers carry compounding legal and reputational risk, because unverifiable sourcing is not a sustainable operating model.

- Enterprises cannot procure responsibly, because permissions and sourcing are often unclear.

The CLEAR Act would introduce notice and reporting obligations intended to make model training inputs more transparent, with specifics defined in the bill text.

Even as details evolve through the legislative process, the direction is clear: transparency is becoming a duty, not a marketing choice.

Why disclosure is the missing infrastructure layer

This is not only a fairness debate. It is a market-structure problem.

You cannot build licensing markets, partnership markets, or responsible procurement on unverifiable claims. Opacity creates friction that taxes the entire ecosystem:

- It blocks licensing at scale. Without traceability, licensing stays fragmented and hard to standardize.

- It slows adoption. Enterprises add layers of review and restrictions because they lack proof of sourcing.

- It undermines legitimacy. The credibility gap compounds, and the cost of trust keeps rising.

Disclosure is the prerequisite for sustainable collaboration between developers, creators, and the companies deploying AI in production.

Bria’s approach: build trust into the model, not around it

Bria’s strategy has been consistent: start with clean inputs and make provenance verifiable.

That means:

- training on licensed data, not scraped corpora

- building systems that support traceability and provenance

- treating accountability as a product requirement, not a communications posture

Legislation like the CLEAR Act matters because it reinforces what responsible builders already know: provenance is foundational infrastructure for trustworthy AI.

A signal that matters: broad creator and industry support

The CLEAR Act is not backed by a single constituency. Senator Schiff’s office published a “What they’re saying” compilation highlighting support from more than 25 groups representing creators and artists.

For anyone still treating transparency as fringe or anti-innovation, this is a reality check. The demand for disclosure is mainstream across the creative economy.

What should happen next

Introducing a bill is not the same as passing it, and passing it is not the same as implementing it well.

To translate the CLEAR Act into durable impact:

- Creators and rightsholders should stay engaged while “meaningful transparency” is being defined.

- Responsible developers should support disclosure publicly. If you have clean inputs and provenance, transparency is a competitive advantage.

- Enterprises should demand traceability from vendors now. Procurement pressure often moves markets faster than regulation.

- Standards and tooling should move in parallel so compliance becomes consistent and scalable.

Closing

The CLEAR Act reflects a basic reality: AI is becoming infrastructure, and infrastructure cannot be governed by secrecy.

This is a meaningful step forward. Now it is time to advance it.