From skeptic to founding artist: how Asaf Hanuka trained an AI on his own work

Bria AI

A field guide to curating, training, and directing your own style model – drawn from Asaf Hanuka's live Artfair masterclass.

Published in collaboration with Asaf Hanuka.

For most professional illustrators, the last three years of generative AI have felt less like a technology story and more like a dispossession story. Models trained on billions of scraped images learned to imitate working artists' styles without permission, without credit, and without a dollar of revenue flowing back to the source.

Asaf Hanuka watched it happen. Then he did something most of his peers didn't: he trained an AI on his own work, on a platform he helped design.

Today Asaf is a Founding Artist of Artfair by Bria and an Artist Advocate for the program. In a recent live masterclass, he walked illustrators through the full pipeline – how to curate a dataset from your own portfolio, train a personal style model, direct it at generation time, and turn its commercial use into revenue you earn while you sleep.

This post is a condensed field guide to that session: the method, the mindset, and the reason it works.

The state of play for professional illustrators

The generative AI industry grew up on the assumption that copyrighted work was fair game as training material. Working artists got two bad choices: be scraped and imitated without consent, or opt out of AI entirely and watch clients drift toward tools that don't respect their craft.

Neither option is a plan. Opting out doesn't pause the market. Being scraped doesn't pay rent.

What's missing is a third path: a way for professional illustrators to participate in AI on terms they actually set. That is the problem Artfair was built to solve.

Asaf's take: from threat to leverage

"I would love to get money while I sleep, and someone else generates my work. It is like an agent, only you don't do the work." - Asaf Hanuka

That line captures the shift. When the model is yours, trained on your work, under your license, with your revenue share, AI stops being a threat to your practice and starts being a multiplier of it. You still do the hard creative work. The model handles the scale you couldn't reach alone.

Artists on Artfair earn 70% of revenue generated through their model. The training data is licensed, not scraped. Attribution travels with every output. Clients are enterprise only.

The four-stage method

Asaf's masterclass breaks down into four stages: curation, training, inference, and workflows. Here is the practical version.

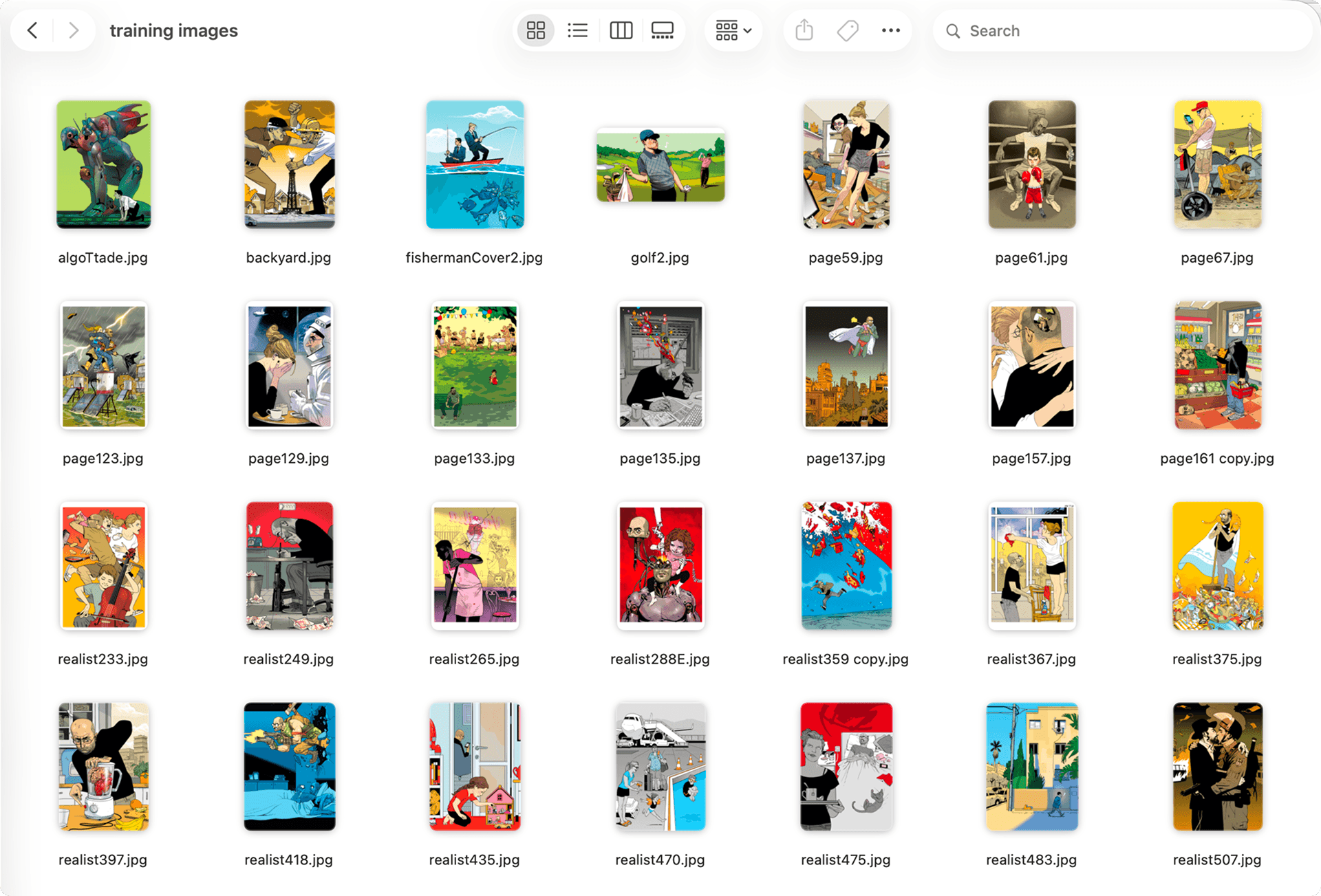

1. Curate a focused dataset (20–30 images)

More is not better. A focused, coherent set outperforms a large noisy one every time.

The rule is series, not portfolio. Choose images that share a visual DNA: related palette, similar line weight, the same compositional energy. Brothers and cousins, not strangers.

Technical minimum: 1,500px on the short side, high-resolution originals. The system auto-captions each image on upload.
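To keep the two hard rules from this step in front of you while you sort a portfolio, here is a minimal Python sketch of a pre-upload check. It assumes you have already read each image's pixel dimensions with a tool of your choice; `ImageInfo` and `check_dataset` are hypothetical names, not part of Bria Create. It checks only count and resolution. Whether the images are "brothers and cousins" is still your judgment.

```python
from dataclasses import dataclass

# Thresholds from the guide: 20-30 images, at least 1,500px on the short side.
MIN_IMAGES = 20
MAX_IMAGES = 30
MIN_SHORT_SIDE_PX = 1500


@dataclass
class ImageInfo:
    """Minimal description of one candidate image (hypothetical structure)."""
    name: str
    width: int
    height: int


def check_dataset(images: list[ImageInfo]) -> list[str]:
    """Return human-readable problems; an empty list means the set passes."""
    problems: list[str] = []
    if not MIN_IMAGES <= len(images) <= MAX_IMAGES:
        problems.append(
            f"dataset has {len(images)} images; aim for {MIN_IMAGES}-{MAX_IMAGES}"
        )
    for img in images:
        short_side = min(img.width, img.height)
        if short_side < MIN_SHORT_SIDE_PX:
            problems.append(
                f"{img.name}: short side is {short_side}px, below {MIN_SHORT_SIDE_PX}px"
            )
    return problems


if __name__ == "__main__":
    candidates = [ImageInfo(f"series_{i:02d}.png", 2400, 1800) for i in range(22)]
    candidates.append(ImageInfo("thumbnail.jpg", 1200, 900))
    for issue in check_dataset(candidates):
        print(issue)  # flags only thumbnail.jpg
```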

2. Train your model

In Bria Create's workspace, create a project (a folder that holds your style models), then pick a training type. Stylized Scene is the default for most illustrators. Other options cover defined characters, character variants, icons, object variants, and multi-object sets. Pick based on the use case, not instinct.

Upload your curated set. Processing takes about a minute.

Then write your Visual DNA – a short text baked into your model at training time. Every generation is filtered through it first. The system auto-generates a draft; always rewrite it.

The auto-draft describes subjects, not style. When Asaf uploaded his work, the system wrote about "a bald man with glasses in states of surprise." Bake that in and every image trends toward that figure, which is not what a general editorial model should do.

Describe formal properties instead:

- Composition – symmetry, layering, negative space, figure-ground

- Color palette – saturation, warmth, hue family, contrast

- Line quality – bold outlines, thin detail, variable weight, edge treatment

- Rendering – cell shading, flat fills, watercolor texture, crosshatching

Keep the Visual DNA open and general so it does not limit you. Add specificity later at the prompt level. Once training starts, the Visual DNA is baked in permanently, so get it right before you press Train.
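One way to force the draft toward formal properties rather than subjects is to write it against the four headings above. The Python sketch below is a hypothetical drafting aid, not Bria's Visual DNA format: `VisualDNA` is an illustrative name, the output is plain text you would paste in yourself, and the example values are placeholders, not Asaf's actual description.

```python
from dataclasses import dataclass, fields


@dataclass
class VisualDNA:
    """Hypothetical drafting aid: one field per formal property from the guide."""
    composition: str
    color_palette: str
    line_quality: str
    rendering: str

    def missing(self) -> list[str]:
        """Names of properties left blank, so no dimension is forgotten."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_text(self) -> str:
        """Join the filled-in properties into one paragraph of style description."""
        parts = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if value:
                label = f.name.replace("_", " ").capitalize()
                parts.append(f"{label}: {value}.")
        return " ".join(parts)


if __name__ == "__main__":
    # Describes style only: no recurring figure, no specific subject.
    dna = VisualDNA(
        composition="layered planes with generous negative space",
        color_palette="muted warm hues with one saturated accent",
        line_quality="bold outer contours, thin interior detail",
        rendering="flat fills with soft cell shading",
    )
    print(dna.missing())  # []
    print(dna.to_text())
```

Keeping each property as its own field makes it obvious when a draft has drifted into describing a character instead of a look.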

Training typically runs a few hours. You will receive an email when the model is ready in Bria Create.

3. Generate with direction, not luck

Select your trained model from the dropdown in Bria Create. The tab system lets you run multiple prompts or aspect ratios in parallel, compare results, and close what you do not need. Everything saves to history.

You have two ways to direct your model: chat prompts and VGL. Both work on the same model. They do different jobs.

Start in chat for exploratory moves. Three prompt registers consistently land:

- Composition keywords: "symmetrical," "vintage poster composition," "panoramic," "full bleed"

- Rendering keywords: "flat color palette," "elegant cell shades," "bold outlines," "graphic silhouette"

- Influence injections: "think like a French comic book," "vintage travel poster." Your style stays the foundation.
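The three registers stack naturally on top of a plain subject line. As a minimal sketch, assuming a simple comma-separated prompt (the guide does not specify any required syntax), a helper like the hypothetical `compose_prompt` below keeps the registers separate so you can vary one while holding the others fixed across tabs.

```python
from typing import Optional


def compose_prompt(
    subject: str,
    composition: list[str],
    rendering: list[str],
    influence: Optional[str] = None,
) -> str:
    """Assemble a chat prompt from the three registers described in the guide.

    The trained model already carries the style, so the prompt only adds
    direction on top of it. Comma-joining is an assumed convention.
    """
    parts = [subject.strip()]
    if composition:
        parts.append(", ".join(composition))
    if rendering:
        parts.append(", ".join(rendering))
    if influence:
        parts.append(influence)
    return ", ".join(p for p in parts if p)


if __name__ == "__main__":
    print(compose_prompt(
        "a lighthouse keeper at dawn",
        composition=["symmetrical", "vintage poster composition"],
        rendering=["flat color palette", "bold outlines"],
        influence="think like a French comic book",
    ))
    # a lighthouse keeper at dawn, symmetrical, vintage poster composition, flat color palette, bold outlines, think like a French comic book
```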

Prompts are the fast path. They are also a translation. You describe what you want in English, the model interprets, and some of your intent gets lost on the way. That is where VGL comes in.

VGL (Visual Generation Language) is the structured language that Bria's models and general agents speak natively. Every image you generate already has a VGL description underneath it: the composition, the lighting, the camera, the aesthetic, every object and how each one is rendered. VGL makes that description editable. No translation. No prompt lottery. Change one parameter and only that parameter changes, while the rest of the image holds.

That matters for two reasons working illustrators care about:

- Precision. Edit the color of one specific object without the whole image shifting. Swap the style medium from illustration to photograph. Change the camera angle without redrawing anything else.

- Consistency. The same inputs produce the same outputs. If a client signs off on an image today, you can regenerate it, tweak it, or produce a matching variant next month and it behaves predictably.
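The actual VGL schema is not reproduced in this guide, so the following is only a conceptual Python sketch of why a structured description allows surgical edits. The `scene` dictionary, its field names, and the `set_param` and `changed_paths` helpers are all hypothetical stand-ins, not Bria's API. The point it illustrates: editing one addressed field of a structured description leaves every other field exactly as it was, which a rewritten free-text prompt cannot guarantee.

```python
import copy
from typing import Any

# Hypothetical, heavily simplified structured description of one image.
# These field names are placeholders, not the real VGL schema.
scene: dict[str, Any] = {
    "composition": {"layout": "symmetrical", "framing": "full bleed"},
    "lighting": {"direction": "left", "mood": "dusk"},
    "camera": {"angle": "eye level", "lens": "wide"},
    "aesthetics": {"style_medium": "illustration"},
    "objects": [
        {"name": "umbrella", "color": "red"},
        {"name": "bench", "color": "green"},
    ],
}


def set_param(description: dict[str, Any], path: list[Any], value: Any) -> dict[str, Any]:
    """Return a deep copy of `description` with exactly one field replaced.

    `path` walks nested dict keys and list indices down to the field to change.
    """
    edited = copy.deepcopy(description)
    node = edited
    for key in path[:-1]:
        node = node[key]
    node[path[-1]] = value
    return edited


def changed_paths(a: Any, b: Any, prefix: tuple = ()) -> list[tuple]:
    """List every path whose value differs between two descriptions."""
    if isinstance(a, dict) and isinstance(b, dict):
        paths: list[tuple] = []
        for key in sorted(set(a) | set(b)):
            paths += changed_paths(a.get(key), b.get(key), prefix + (key,))
        return paths
    if isinstance(a, list) and isinstance(b, list) and len(a) == len(b):
        paths = []
        for i, (x, y) in enumerate(zip(a, b)):
            paths += changed_paths(x, y, prefix + (i,))
        return paths
    # Leaf values (or lists of different length) are compared as a whole.
    return [] if a == b else [prefix]


if __name__ == "__main__":
    # Swap the style medium, as in the illustration-to-photograph workflow.
    photo = set_param(scene, ["aesthetics", "style_medium"], "photograph")
    print(changed_paths(scene, photo))  # [('aesthetics', 'style_medium')]
```

Because the edit is a pure function of its inputs, applying the same change to the same description always yields the same result, which is the property the Consistency point relies on.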

When to reach for which:

- Use chat for broad moves. A new color palette across the whole image, adding or removing a major element, an overall mood shift.

- Use VGL for surgical moves. The color of one specific object, a style-medium switch, precise camera or lighting changes.

Prompts are how you find the image. VGL is how you finish it.

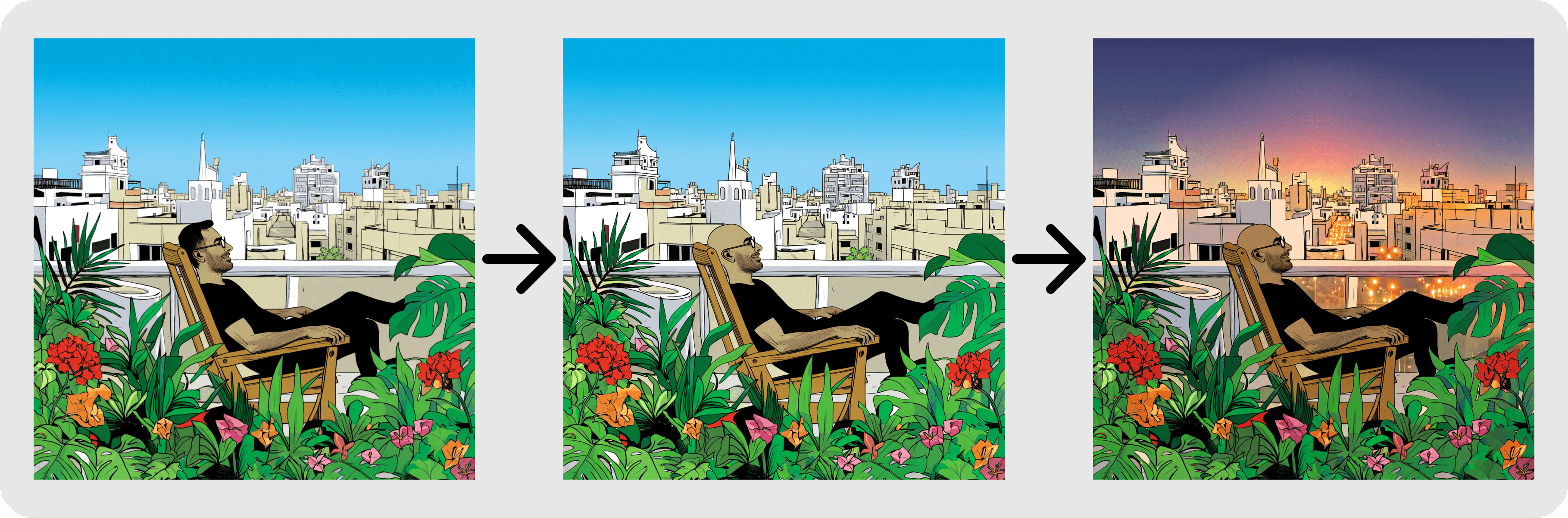

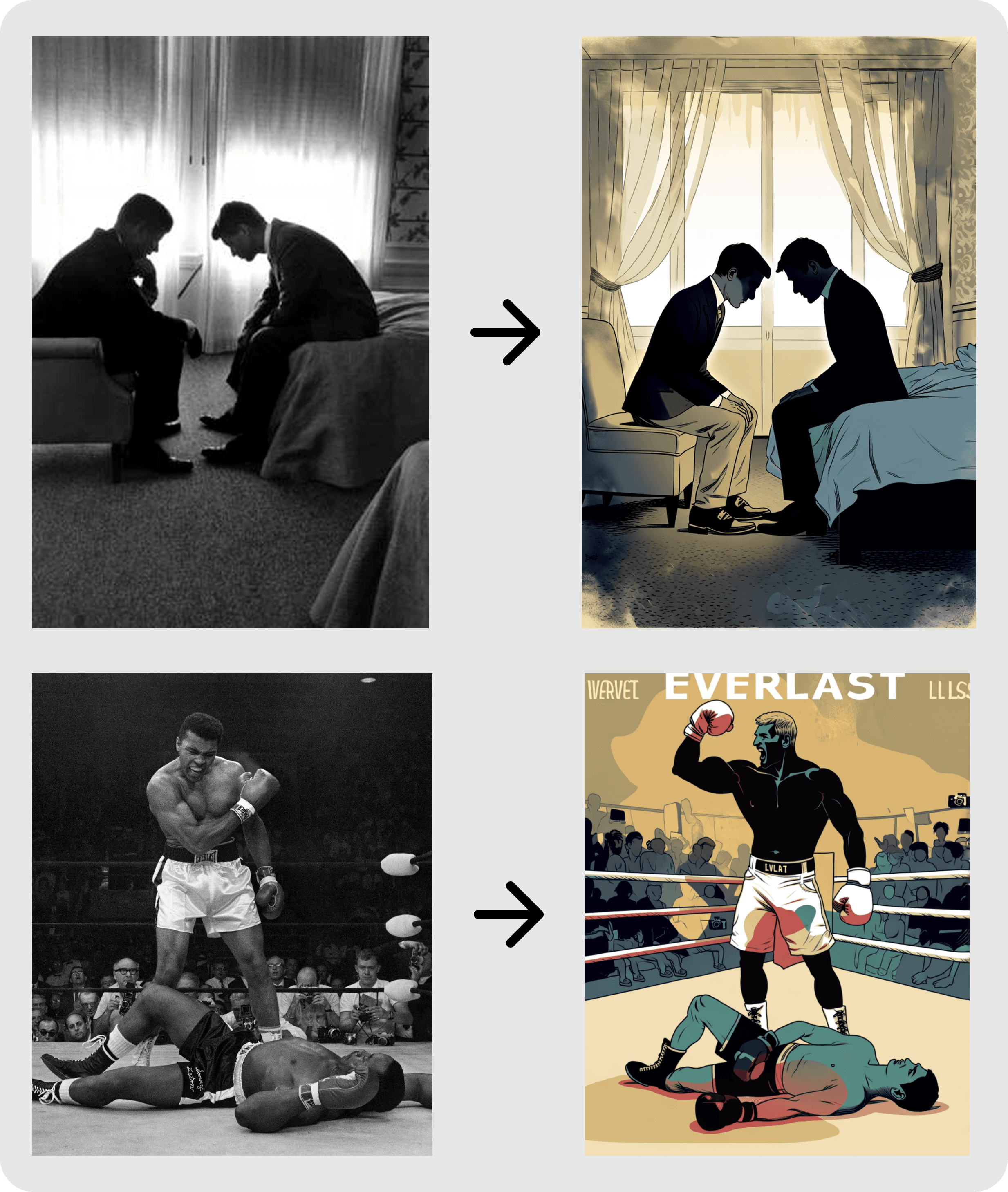

4. Build real workflows

Three workflows Asaf uses in production:

- Illustration to photograph. Upload a finished illustration. In VGL → Aesthetics, change the style medium to "photograph." Use the result as a reference for anatomy, lighting, and shadow behavior.

- Mood board to illustration. Upload a photo with a composition or emotional quality you want to explore. Add a prompt describing the kind of illustration you imagine. The model treats the photo as structural and emotional reference, not a copy template.

- Character consistency for book work. Train a dedicated model per character using 20 images across angles and expressions. When you generate with that model, the character stays consistent without describing them in every prompt.
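Covering "angles and expressions" evenly is easier with a shot list. The sketch below crosses a set of angles with a set of expressions; the specific angle and expression names are hypothetical examples, chosen so that five angles times four expressions gives exactly the 20 images the guide mentions.

```python
from itertools import product

# Hypothetical coverage grid; the guide only says "20 images across angles and expressions".
ANGLES = ["front", "three-quarter left", "three-quarter right", "profile", "back"]
EXPRESSIONS = ["neutral", "joy", "anger", "surprise"]


def shot_list(angles: list[str], expressions: list[str], target: int = 20) -> list[str]:
    """Pair every angle with every expression and return `target` shots.

    Keeps the first `target` pairs, so order both lists by priority.
    Raises ValueError if the grid is too small to reach the target.
    """
    combos = [f"{a} / {e}" for a, e in product(angles, expressions)]
    if len(combos) < target:
        raise ValueError(f"only {len(combos)} combinations; add angles or expressions")
    return combos[:target]


if __name__ == "__main__":
    for i, shot in enumerate(shot_list(ANGLES, EXPRESSIONS), start=1):
        print(f"{i:02d}. {shot}")
```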

Where the commercial layer lives

Bria Create is where you work. Artfair is where your trained model meets enterprise clients, and where the revenue routes back to you.

Two commercial categories open up once your model is licensable:

- Scale projects. A retailer needs 100,000 personalized holiday cards. No illustrator can produce that volume by hand. With your licensed model, the work gets made in your style and you earn on every generation.

- Brand interactions. Brands invite audiences to create personalized versions of products using your style, a commercial category that did not exist before generative AI.
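The arithmetic behind "you earn on every generation" is simple once a price is fixed. This guide does not state how Artfair prices generations, so the per-generation price below is purely hypothetical; only the 70% share comes from the program terms described here. Amounts are kept in integer cents to avoid floating-point rounding on money.

```python
ARTIST_SHARE_PERCENT = 70  # from the guide: 70% of revenue goes to the artist


def artist_earnings_cents(
    generations: int,
    price_per_generation_cents: int,  # hypothetical: real pricing is not stated here
    share_percent: int = ARTIST_SHARE_PERCENT,
) -> int:
    """Artist's share of gross revenue, in whole cents (rounded down)."""
    revenue_cents = generations * price_per_generation_cents
    return revenue_cents * share_percent // 100


if __name__ == "__main__":
    # 100,000 holiday cards at an assumed 10 cents per generation.
    cents = artist_earnings_cents(100_000, 10)
    print(f"${cents / 100:,.2f}")  # $7,000.00
```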

Revenue share is 70% to the artist. Clients are enterprise only. Attribution and provenance are built in.

Why Bria, specifically

Most AI platforms treat licensing as a legal problem to manage. Bria treats it as the foundation the system is built on.

- Rights-clear by construction. The foundation models are trained on 100% licensed, opt-in, compensated content. That is why brands can put outputs into commercial use without exposure, and why artists show up in the first place.

- Control, not prompt luck. VGL gives you explicit, reproducible control over composition, lighting, style, and camera. Same inputs, same outputs. Your style stays your style.

- A commercial surface, not just a model. Artfair turns a trained model into a licensable asset with attribution, enterprise clients, and a 70% revenue share routed back to the artist.

Same data flywheel as the rest of the industry. Different ethics, and a different economic contract with the people whose work makes it possible.

Start here

Artists: If you want to train a model on your own work and put it in front of enterprise clients, apply to Artfair.

Everyone else: Bria Create is launching soon. Join the waitlist to be first in when it opens.