Building Advanced AI Solutions:

The Technology Behind Our Developer Toolkit

Our technology suite is designed for developers, product builders, and AI engineers, providing the essential components to create, train, and deploy cutting-edge AI-powered products. From APIs and SDKs to robust foundation models, our platform covers all aspects of AI development.

Here’s a Detailed Look at the Core Technologies Driving Our Products

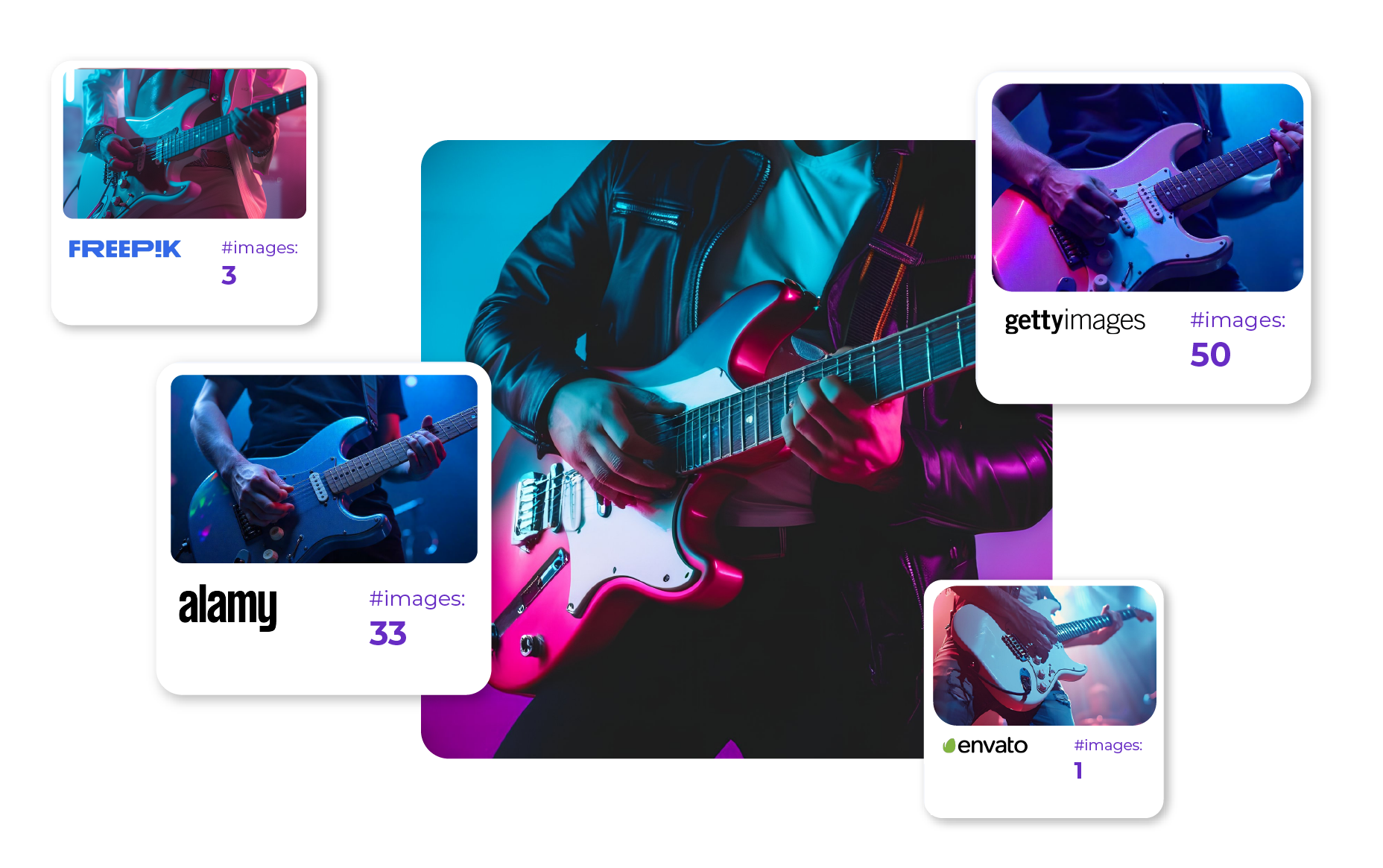

Multi-Modal Attribution Engine

The Multi-Modal Attribution Engine is a patented tool that supports artist equity by accurately attributing credit to every piece of content that contributes to a model inference. It tracks the assets involved and assigns a value to each one, so that creators receive proper recognition and compensation.
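As a rough illustration of what "assigning value to assets" can mean in practice, the sketch below splits a payout across contributing assets in proportion to an influence score. This is not the engine's actual method, which the page does not describe: the function name `split_credit`, the asset names, and the influence scores are all hypothetical, and proportional splitting is just one simple scheme chosen for the example.

```python
from typing import Dict


def split_credit(influence: Dict[str, float], payout: float) -> Dict[str, float]:
    """Split `payout` across assets in proportion to their influence scores.

    `influence` maps a (hypothetical) asset id to a non-negative score that
    says how much the asset contributed to one generated output.
    """
    if any(score < 0 for score in influence.values()):
        raise ValueError("influence scores must be non-negative")
    total = sum(influence.values())
    if total <= 0:
        raise ValueError("at least one asset needs a positive influence score")
    return {asset: payout * score / total for asset, score in influence.items()}


# Hypothetical scores for a single generated image.
shares = split_credit({"asset_a": 3.0, "asset_b": 1.0}, payout=100.0)
print(shares)  # {'asset_a': 75.0, 'asset_b': 25.0}
```

In a real system the hard part is estimating the influence scores themselves; the split shown here only covers the final bookkeeping step.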

Data Training Platform

Our Data Training Platform is a custom-built solution designed to train models specifically for content creation. It handles the entire training lifecycle, from data ingestion and preprocessing to model training and validation, ensuring that models are trained with high-quality, diverse data for optimal performance.

Cloud Server Agnostic Infrastructure

Our Cloud Server Agnostic Infrastructure allows you to deploy AI models anywhere, whether on AWS, Azure, Google Cloud, or your own on-premises servers. This infrastructure provides flexibility and scalability, making it easy to integrate AI capabilities into your existing systems without being locked into a single cloud provider.
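One common way to keep deployment code provider-neutral is to hide each environment behind a shared interface. The sketch below shows that pattern only; it is not the platform's SDK. The class names, the `deploy` method, and the endpoint strings are hypothetical, and no real cloud API is called.

```python
from abc import ABC, abstractmethod
from typing import List


class DeploymentTarget(ABC):
    """Hypothetical interface that every environment implements."""

    @abstractmethod
    def deploy(self, model_name: str) -> str:
        """Deploy the model and return an endpoint identifier."""


class CloudTarget(DeploymentTarget):
    def __init__(self, provider: str, region: str) -> None:
        self.provider = provider
        self.region = region

    def deploy(self, model_name: str) -> str:
        # A real implementation would call the provider's own tooling here.
        return f"{self.provider}://{self.region}/{model_name}"


class OnPremTarget(DeploymentTarget):
    def __init__(self, host: str) -> None:
        self.host = host

    def deploy(self, model_name: str) -> str:
        return f"onprem://{self.host}/{model_name}"


def deploy_everywhere(model_name: str, targets: List[DeploymentTarget]) -> List[str]:
    """The caller never branches on the provider; it only uses the interface."""
    return [target.deploy(model_name) for target in targets]


endpoints = deploy_everywhere(
    "text-to-image-v1",  # hypothetical model name
    [CloudTarget("aws", "us-east-1"), OnPremTarget("gpu-box-01")],
)
print(endpoints)
```

Adding another provider then means adding one class, with no change to the calling code.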

Text-to-Image Foundation Models

The Text-to-Image Foundation Models form the core of our image generation capabilities, enabling users to convert textual prompts into high-quality images. These pre-trained models serve as the backbone for creating visuals that are accurate, detailed, and aligned with user-defined inputs.

Data Baking: Pipelines, Analysis, Loaders, Captioning

Data Baking encompasses the entire process of preparing and processing data for training AI models. It includes building data pipelines, performing data analysis, loading datasets efficiently, and generating accurate captions for training. This comprehensive approach ensures that the data fed into models is clean, structured, and relevant.
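The four parts named above (pipelines, analysis, loaders, captioning) can be pictured with a small, self-contained sketch. It assumes a dataset of image paths with text captions; the `Record` type, the cleaning rules, and the file names are hypothetical and stand in for whatever the real pipeline does.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List


@dataclass(frozen=True)
class Record:
    image_path: str
    caption: str


def clean(records: Iterable[Record]) -> List[Record]:
    """Pipeline step: normalize caption whitespace, drop empty captions and duplicate images."""
    seen = set()
    kept = []
    for record in records:
        caption = " ".join(record.caption.split())
        if not caption or record.image_path in seen:
            continue
        seen.add(record.image_path)
        kept.append(Record(record.image_path, caption))
    return kept


def mean_caption_words(records: List[Record]) -> float:
    """Analysis step: average caption length in words (0.0 for an empty dataset)."""
    if not records:
        return 0.0
    return sum(len(r.caption.split()) for r in records) / len(records)


def batches(records: List[Record], size: int) -> Iterator[List[Record]]:
    """Loader step: yield fixed-size batches; the last one may be smaller."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


raw = [
    Record("a.png", "  a red   bicycle "),
    Record("b.png", ""),                 # dropped: empty caption
    Record("a.png", "duplicate entry"),  # dropped: image already seen
    Record("c.png", "two cats on a sofa"),
]
cleaned = clean(raw)
print(cleaned)
print(mean_caption_words(cleaned))  # 4.0
print([len(batch) for batch in batches(cleaned, size=1)])  # [1, 1]
```

Caption generation itself would normally rely on a captioning model; here the captions are given, and the sketch only covers cleaning, analysis, and loading.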

Model Benchmarking & Evaluation

Our Model Benchmarking & Evaluation system measures the inference performance of each AI model. This process verifies that models meet quality standards and perform consistently across different use cases, providing reliable outputs for end-users.
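To make "perform consistently across different use cases" concrete, the sketch below scores a model per use case and checks each score against a quality threshold. Everything in it is an assumption made for illustration: the `evaluate` function, the toy model, the test cases, and the exact-match metric, which stands in for whatever image-quality metrics a real text-to-image benchmark would use.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

Examples = List[Tuple[str, str]]  # (input, expected output) pairs


def evaluate(
    model: Callable[[str], str],
    cases: Dict[str, Examples],
    threshold: float,
) -> Dict[str, Dict[str, object]]:
    """Return per-use-case accuracy and whether it meets the threshold."""
    report = {}
    for use_case, examples in cases.items():
        scores = [1.0 if model(prompt) == expected else 0.0 for prompt, expected in examples]
        accuracy = mean(scores)
        report[use_case] = {"accuracy": accuracy, "passed": accuracy >= threshold}
    return report


# Toy stand-in for a model: it just upper-cases its input.
toy_model = str.upper

report = evaluate(
    toy_model,
    cases={
        "shouting": [("hi", "HI"), ("ok", "OK")],
        "mixed": [("hi", "HI"), ("ok", "ok")],
    },
    threshold=0.8,
)
print(report)
# {'shouting': {'accuracy': 1.0, 'passed': True}, 'mixed': {'accuracy': 0.5, 'passed': False}}
```

Reporting results per use case, rather than as one overall average, is what exposes a model that does well on one kind of input and poorly on another.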

Why These Technologies Matter for Developers and AI Engineers

By integrating these technologies into our developer toolkit, we provide the foundational elements necessary to create robust, scalable, and adaptable AI-powered applications. Whether you’re building tools for content creation, deploying models across cloud infrastructures, or ensuring fair attribution for creative assets, our technology suite delivers the flexibility, performance, and reliability needed to develop and launch AI solutions at scale.